How Monte Carlo forecasting and risk-adjusted probability modelling help leaders make better decisions under uncertainty

Pharmaceutical strategy is full of uncomfortable truths. Your best opportunity might be a molecule that fails late. Your most important launch forecast might be wrong for reasons that have nothing to do with the brand team. Your budget might be “approved” but your real constraint is time, stakeholder attention, manufacturing capacity, or payer acceptability.

In that environment, the organisations that win are not the ones with the most confident point forecasts. They are the ones that can quantify uncertainty, stress test assumptions, and allocate resources to the decisions that matter most.

That is the practical promise of advanced analytics and simulation modelling in pharma: moving from “what do we think will happen?” to “what range of outcomes should we plan for, what drives that range, and what actions shift the odds?”

Regulators are also moving in the same direction. The FDA’s Model-Informed Drug Development (MIDD) Paired Meeting Program is explicitly designed to advance the use of quantitative models derived from preclinical and clinical data to support development and regulatory decision-making. The European Medicines Agency (EMA) likewise publishes Q&As and guidance material on modelling and simulation, including specific guidance for PBPK (physiologically based pharmacokinetic) modelling reports.

This post is a field guide to how advanced analytics and simulation are actually used in commercial, medical, and market access contexts, with a focus on two high-leverage techniques:

- Monte Carlo forecasting (a disciplined way to forecast by simulating thousands of plausible futures)

- Risk-adjusted probability modelling (combining uncertainty with probabilities of success and key risks to produce decision-ready forecasts)

Why simulation beats single-number forecasts in pharma

Pharma forecasting has a unique shape of uncertainty:

- Heavy tails: rare events (a safety signal, a competitor label, a PBAC restriction) can dominate outcomes.

- Step changes: events occur at discrete points (readouts, submissions, approvals, listings).

- Interdependence: demand is coupled to diagnostics, capacity, channel, and behaviour.

When you compress all of that into a single number (or even a high/low range), you lose the logic that leaders need to act. Simulation gives you back three things that matter:

- A distribution, not a point: credible outcome ranges and their likelihoods.

- Attribution: which assumptions drive the variance (what to validate first).

- Decision impact: what interventions change the odds or reduce downside.

This mindset is well aligned with health economics and HTA, where quantifying parameter uncertainty via probabilistic sensitivity analysis (often using Monte Carlo simulation) is a foundational practice.

A quick map of simulation in the pharma lifecycle

Simulation is not a single method. It is a family of approaches applied at different points in the value chain.

1) R&D and clinical development

- Clinical trial simulation to optimise endpoints, sample size, enrichment, dose, and decision rules.

- PK/PD and PBPK modelling to predict exposure and inform dosing in specific populations.

- Model-informed drug development to integrate evidence and support regulatory discussions.

- Budget impact simulations that incorporate uptake uncertainty, persistence, and eligibility ranges.

2) Commercial strategy and forecasting

- Monte Carlo revenue forecasting incorporating uptake curves, competitive shocks, pricing, channel mix, and adherence.

- Risk-adjusted forecasts that incorporate probability of technical and regulatory success, and scenario likelihoods (for example, approval timing and restriction risk).

- Operational simulations for supply chain resilience and service capacity.

This post focuses on the commercial and market access end, but the logic is consistent across the lifecycle: make uncertainty explicit, simulate it, then act on what the simulation says is driving risk and opportunity.

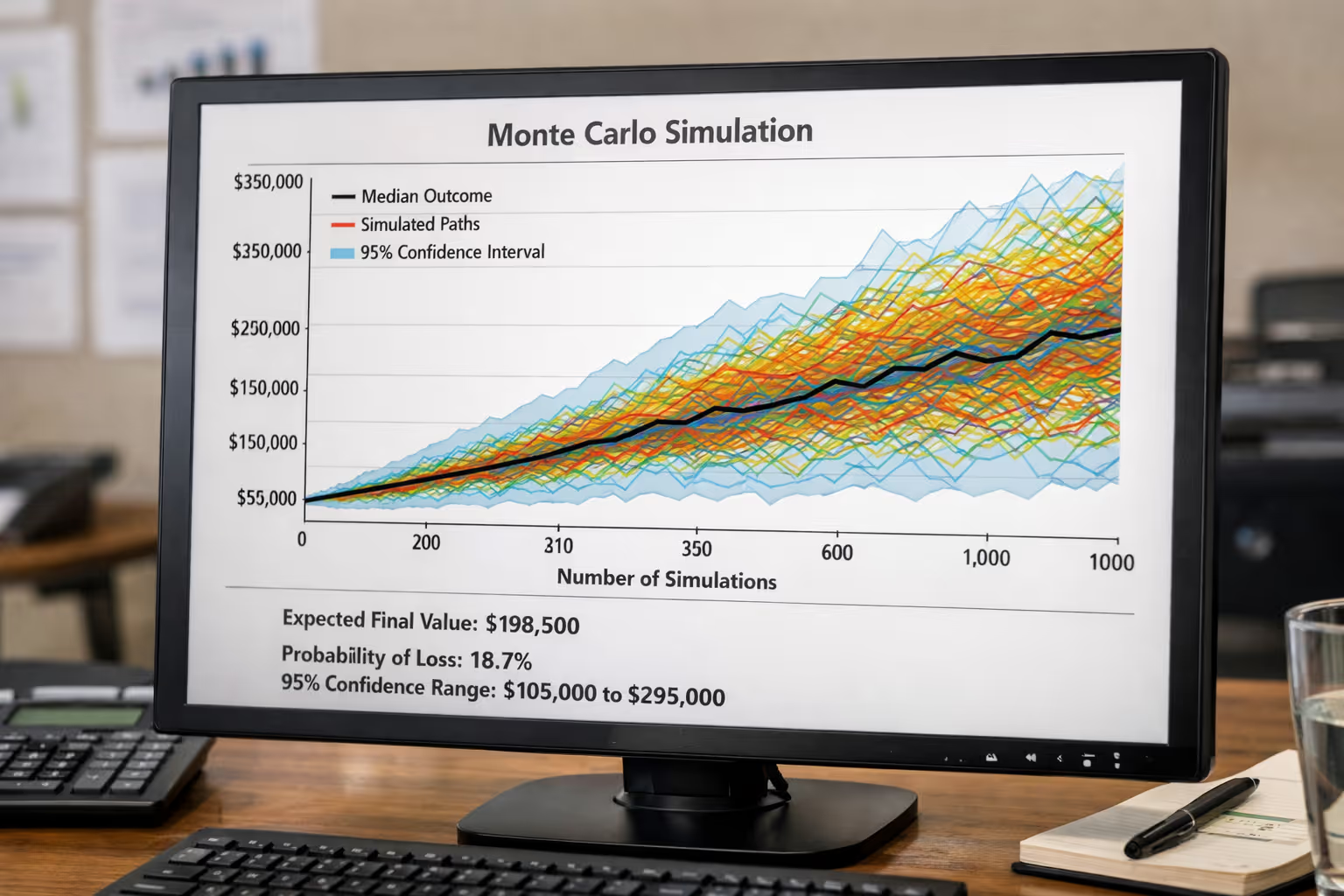

Monte Carlo forecasting, explained like you will actually use it

A standard forecast usually looks like:

Revenue = Eligible patients × Share × Price × Duration

Monte Carlo forecasting keeps the same structure, but treats key inputs as distributions rather than fixed values. Then it draws random samples from those distributions and recalculates the outcome thousands of times.

You end up with a probability distribution of revenue, not a single line.

Step 1: Choose the “few variables that matter”

Most forecasts do not fail because someone mis-typed the price. They fail because of a handful of assumptions that were treated as certain.

Typical high-impact variables in pharma commercial forecasting include:

- Size of treatable population (and how quickly it expands)

- Speed of adoption (especially around guideline changes)

- Persistence and discontinuation

- Competitive entry timing and differentiation

- Net price and channel mix

- Listing timing and restrictions (payer behaviour is often the biggest swing factor)

Step 2: Assign distributions, not guesses

Instead of “market share will be 12%”, you specify something like:

- Market share at year 2: triangular distribution (min 6%, mode 12%, max 18%)

- Time to PBS listing: discrete distribution (for example, 40% in 12 months, 40% in 18 months, 20% in 24 months)

- Discontinuation at 6 months: beta distribution (reflecting rates and sample size uncertainty)

This is the intellectual discipline. It forces teams to say: what would have to be true for the downside to happen, and how likely is that?

Step 3: Run the simulation (10,000+ iterations is common)

Each iteration samples a value for each variable and computes revenue. After thousands of iterations you can answer:

- What is the P50 (median) forecast?

- What is the P10–P90 range (credible planning range)?

- What is the probability revenue is below the level needed to justify investment?

- Which variables explain most variance (sensitivity)?

This approach is directly analogous to probabilistic sensitivity analysis used in health economics, where Monte Carlo sampling is used to propagate parameter uncertainty through a model.

Step 4: Diagnose drivers using sensitivity, not opinions

Two practical tools matter here:

- Tornado charts (or regression-based sensitivity) to show what drives variance

- Value of information thinking: validate the variables that reduce uncertainty the most (an idea strongly associated with modern modelling good research practices).

If your simulation shows that “time to listing” explains 45% of outcome variance, your next action is not polishing a brand narrative. It is tightening the evidence plan, the payer story, and the stakeholder pathway that influences listing timing.

Risk-adjusted probability modelling: turning uncertainty into decisions

Monte Carlo gives you outcome ranges. Risk-adjusted probability modelling goes one step further: it changes forecasts based on the likelihood that key events occur.

In pharma, there are two common layers:

Layer 1: Probability of success (PoS) gates

For pipeline assets, leaders often apply:

- Probability of technical success (clinical efficacy and safety)

- Probability of regulatory success (approval)

- Probability of reimbursement success (listing and restriction outcome)

A simple version is:

Risk-adjusted NPV (rNPV) = Σ [Cash flow_t × PoS_stage × discount factor_t]

This is powerful, but incomplete on its own, because it usually assumes a single cash flow line per stage. Real decisions require combining PoS with the outcome distribution.

Layer 2: Joint simulation of PoS and commercial uncertainty

A more decision-ready approach is to simulate both:

- Event outcomes (approval yes/no, listing timing, restriction scenario)

- Commercial variables (adoption, share, price, persistence)

That produces a distribution of outcomes where many iterations are “zero revenue” (failure or no listing), and the successes have their own range.

That is what executives actually need: the probability-weighted distribution.

This is also conceptually aligned with how modelling and simulation are used to support benefit–risk considerations and trial design decisions in regulatory contexts.

A practical example: two forecasts that look the same but mean different things

Imagine two assets both show “A$120m at Year 5” on a slide.

- Asset A: narrow distribution, high PoS, moderate upside

- Asset B: very wide distribution, lower PoS, extreme upside tail

If you only look at the point estimate, they look identical. In reality:

- Asset A is easier to resource and plan.

- Asset B might be the better portfolio bet, but needs options thinking (staged investment, kill criteria, early evidence).

Monte Carlo + risk adjustment separates these cases cleanly. It helps you decide whether you should:

- invest early to reduce uncertainty (generate evidence), or

- constrain exposure (stage gates, partnerships), or

- lean in (because upside tail justifies risk)

Where Monte Carlo forecasting fits best in pharma commercial work

1) Launch planning under payer uncertainty

If listing timing and restriction shape most outcomes, simulation gives you:

- probability-weighted revenue by year

- downside planning (cash and resourcing risk)

- a clearer case for “what evidence moves the decision”

NICE’s methods guidance is explicit about evaluating cost effectiveness and the broader modelling approach expected in technology evaluations. (While Australia’s PBAC has its own processes, the discipline of representing uncertainty and scenario testing is widely shared across HTA systems.)

2) Portfolio prioritisation and resource allocation

Instead of arguing for budget based on the best case, you can compare:

- probability of meeting minimum hurdles

- expected value

- downside risk (tail probability of very poor outcomes)

3) Forecast credibility with senior leadership

Leadership skepticism about forecasts is rational. Simulation earns credibility because it is transparent:

- “Here are the assumptions, here is the uncertainty, here is what drives risk.”

- It also makes trade-offs explicit: narrow uncertainty is valuable, but it costs evidence, time, and money.

4) Stress testing competitive threats

You can represent competitor entry as:

- a random event time

- a random magnitude of share erosion

- a correlated effect on price and persistence

Those correlations matter. They are why real life breaks spreadsheet forecasts.

Common mistakes and how to avoid them

Mistake 1: “We ran Monte Carlo” but the distributions are fantasy

If the distributions are not evidence-based, you just simulated confidence.

Fix: anchor distributions in data (historical analogues, real-world evidence, expert elicitation with calibration).

Mistake 2: Too many variables, no insight

A model with 80 uncertain inputs is often a sign that the team has not decided what matters.

Fix: start with 8–15 variables, then expand only when sensitivity shows it is warranted.

Mistake 3: No governance for assumptions

Simulation outputs can look precise, which tempts teams to treat them as “the answer”.

Fix: treat the model as a decision tool with versioning, assumption logs, and review cycles.

Mistake 4: Ignoring ethics, transparency, and stakeholder trust

Advanced analytics can be misused to launder weak assumptions into authoritative-looking outputs.

Fix: disclose uncertainty ranges, show sensitivity drivers, and document the rationale for key priors. Guidance on modelling good research practices and uncertainty analysis reinforces transparency and appropriate reporting.

Why this matters now: the “model-informed” era is accelerating

Regulators are increasingly open to, and in some cases expect, well-justified modelling and simulation. The FDA’s MIDD program explicitly supports the development and application of quantitative models in drug development and regulatory review. EMA provides ongoing Q&As and technical guidance on modelling and simulation, including PBPK reporting expectations.

Commercially, the pace of competition and the scrutiny on value are rising. That pushes leaders toward tools that can:

- connect evidence to decision pathways

- quantify uncertainty credibly

- allocate investment based on probability-weighted value, not optimism

References

Baio, G. (2008) Probabilistic sensitivity analysis in health economics. UCL Research Paper.

Caro, J.J. et al. (2012) ‘Modeling Good Research Practices—Overview’, Value in Health.

Claxton, K. et al. (2005) ‘Probabilistic sensitivity analysis for NICE technology appraisal’, Health Economics.

Dahabreh, I.J. et al. (2016) Guidance for the Conduct and Reporting of Modeling and Simulation Studies in Health Research. Agency for Healthcare Research and Quality (AHRQ), NCBI Bookshelf.

European Medicines Agency (EMA) (2018) Guideline on reporting the results of physiologically-based pharmacokinetic (PBPK) modelling and simulation.

European Medicines Agency (EMA) (n.d.) Modelling and simulation: questions and answers.

Food and Drug Administration (FDA) (2026) Model-Informed Drug Development (MIDD) Paired Meeting Program.

Hill-McManus, D. et al. (2021) ‘Combining Model-Based Clinical Trial Simulation and Bayesian Methods to Optimise Trial Design’, CPT: Pharmacometrics & Systems Pharmacology.

Marshall, S.F. et al. (2013) ‘Modeling and Simulation to Optimize the Design and Interpretation of Phase III Studies’, Clinical Pharmacology & Therapeutics.

NICE (National Institute for Health and Care Excellence) (n.d.) Economic evaluation: NICE health technology evaluation methods.

ISPOR (n.d.) Model Parameter Estimation and Uncertainty (Outcomes Research Guidelines).

Chen, W. et al. (2024) ‘Understanding modelled economic evaluations’, Medical Journal of Australia.

Ready to explore further?

Let's discuss how these insights apply to your organisation.